AI is often vilified, with myths shaping public perception more than facts. Let us dispel four common myths about AI and present a more balanced view of its potential and limitations.

1. AI Is Environmentally Costly

One of the most persistent claims about AI is that its use requires massive amounts of energy and water, making it unsustainable in the long run. While it is true that training large AI models can be energy-intensive, this perspective needs context. Consider the environmental cost of daily activities such as driving a car, taking a shower, or watching hours of television. AI, on a per-minute basis, is significantly less taxing than these routine activities.

More importantly, AI is becoming a key driver in creating energy-efficient solutions. From optimizing power grids to improving logistics for reduced fuel consumption, AI has a role in mitigating the very problems it is accused of exacerbating. Furthermore, advancements in hardware and algorithms continually reduce the energy demands of AI systems, making them more sustainable over time.

In the end, it is a question of balance. The environmental cost of AI exists, but the benefits, whether in terms of solving climate challenges or driving efficiencies across industries, often outweigh the negatives.

2. AI Presents High Risks to Cybersecurity and Privacy

Another major concern is that AI poses a unique threat to cybersecurity and privacy. Yet there is little evidence to suggest that AI introduces any new vulnerabilities that were not already present in our existing digital infrastructure. To date, there has not been a single instance of data theft directly linked to AI models like ChatGPT or other large language models (LLMs).

In fact, AI can enhance security. It helps detect anomalies and intrusions faster than traditional software, potentially catching cyberattacks in their earliest stages. Privacy risks do exist, but they are no different from the risks inherent in any technology that handles large amounts of data. Regulations and ethical guidelines are catching up, helping to keep AI applications as secure as other systems we rely on.

It is time to focus on the tangible benefits AI provides, such as faster detection of fraud or the ability to sift through vast amounts of data to prevent attacks, rather than the hypothetical risks. The fear of AI compromising our security is largely unfounded.

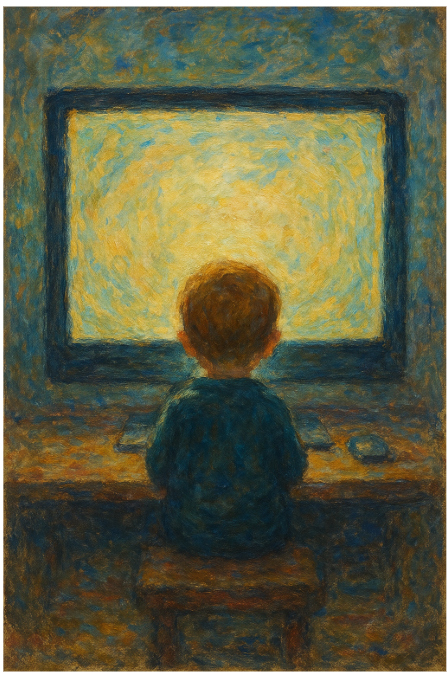

3. Using AI to Create Content Is Dishonest

The argument that AI use, especially in education, is a form of cheating reflects a misunderstanding of technology’s role as a tool. It is no more dishonest than using a calculator for math or employing a spell-checker for writing. AI enhances human capacity by offering assistance, but it does not replace critical thinking, creativity, or understanding.

History is full of examples of backlash against new technologies. Consider the cultural resistance to firearms in Europe during the late Middle Ages. Guns were viewed as dishonorable because they undermined traditional concepts of warfare and chivalry, allowing common soldiers to defeat skilled knights. This resistance did not last long, however, as societies learned to adapt to the new tools, and guns ultimately became an accepted part of warfare.

Similarly, AI is viewed with suspicion today, but as we better integrate it into education, the conversation will shift. The knights of intellectual labor are being defeated by peasants with better weapons. AI can help students better understand complex topics, offer personalized feedback, and enhance learning. The key is to see AI as a supplement to education, not a replacement for it.

4. AI Is Inaccurate and Unreliable

Critics often argue that AI models, including tools like ChatGPT, are highly inaccurate and unreliable. However, empirical evidence paints a different picture. While no AI is perfect, the accuracy of models like ChatGPT or Claude when tested on general undergraduate knowledge is remarkably high, often in the range of 85-90%. For comparison, the average human memory recall rate is far lower, and experts across fields frequently rely on tools and references to supplement their knowledge.

AI continues to improve as models are fine-tuned with more data and better training techniques. While early versions may have struggled with certain tasks, the current generation of AI models is much more robust. As with any tool, the key lies in how it is used. AI works best when integrated with human oversight, where its ability to process vast amounts of information complements our capacity for judgment. AI’s reliability is not perfect, but it is far from the "uncontrollable chaos" some claim it to be.

***

AI, like any revolutionary technology, invites both excitement and fear. Many of the concerns people have, however, are rooted in myth rather than fact. When we consider the evidence, it becomes clear that the benefits of AI, whether in energy efficiency, cybersecurity, education, or knowledge accuracy, far outweigh its potential downsides. The challenge now is not to vilify AI but to understand its limitations and maximize its strengths.