This MIT study has a fundamental design flaw that undermines its conclusions about AI and student engagement. The researchers measured participants writing essays in response to formulaic SAT prompts, precisely the kind of mechanistic, template-driven assignments that have plagued education for decades.

These SAT prompts follow a predictable pattern, for example: "Should people who are more fortunate have more moral obligation to help others?" Students recognize this format immediately. They know teachers expect an introduction that restates the prompt, three supporting paragraphs, and a conclusion that circles back. These assignments reward compliance over creativity.

When students used ChatGPT for such tasks, they made rational choices. Why expend mental energy on assignments designed to test formatting rather than thinking? The AI could generate expected academic language and hit word counts. Students preserved cognitive resources for work that actually mattered.

The study inadvertently exposes the poverty of traditional academic writing instruction. When researchers found that 83.3% of LLM users "failed to provide a correct quotation" from their own essays, they interpreted this as cognitive impairment. A more accurate reading suggests these students recognized the meaninglessness of the exercise. They had not internalized content they never owned.

The EEG data supports this interpretation. Brain activity decreased in LLM users because the task required less genuine cognitive engagement. The AI handled mechanical aspects of essay construction, leaving little for human minds to contribute. This reflects the limitations of the assignment, not the tool.

The study's most damning evidence against itself lies in teacher evaluations. Human educators consistently identified AI-assisted essays by their "homogeneous" structure and "conventional" approaches. These teachers recognized what researchers missed: when you ask for mediocrity and provide tools to automate it, you get mechanized responses.

The real experiment was not about AI versus human capability. It was about the difference between authentic intellectual work and academic busywork. Students given meaningful tasks that connect to their experiences and require genuine synthesis behave differently from those asked to produce essays purely for assessment.

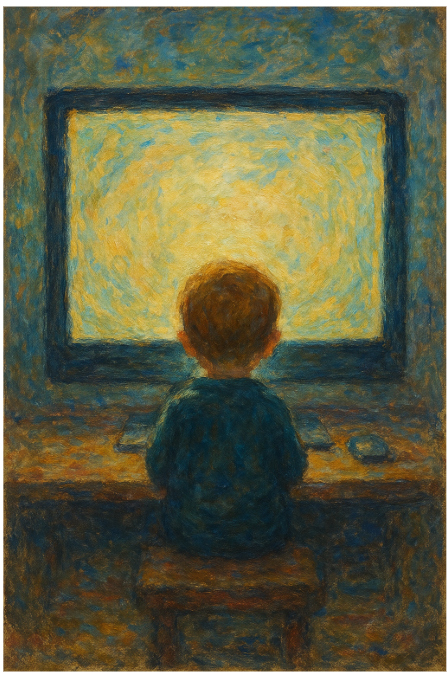

True engagement emerges when students encounter problems that matter to them. Students spend hours exploring complex philosophical questions with AI when assignments invite genuine inquiry. They argue with the AI, test perspectives, and use it to reach ideas beyond their own knowledge. The same students who mechanically generate SAT responses become deeply engaged when the intellectual stakes feel real.

The study reveals an important truth, but not the one its authors intended. It demonstrates that students distinguish between meaningful work and make-work. When presented with assignments testing their ability to reproduce expected formats, they seek efficient solutions. When challenged with authentic intellectual problems, they bring full cognitive resources to bear.

The researchers worried about "neural efficiency adaptation." They should celebrate this finding. It suggests students learned to allocate cognitive resources intelligently rather than wastefully.

The future of education lies not in restricting AI tools but in designing learning experiences that make productive use of them. This requires abandoning SAT-style prompts. We need assignments that are genuinely AI-hard: tasks requiring contextual understanding, ethical reasoning, and creative synthesis from human experience.

When assignments can be completed adequately by AI with minimal human oversight, they probably should be. This frees human minds for work requiring judgment, creativity, and personal investment that AI cannot provide.

The study's own data supports this. When participants with prior independent writing experience gained AI access, they showed enhanced neural activity. They used tools strategically to extend thinking rather than replace it.

Students are telling us something important when they automate routine academic tasks. They are saying their mental energy deserves better purposes. The researchers measured decreased effort on meaningless assignments and concluded AI reduces engagement. They should have asked whether assignments worthy of human intelligence would show different results.